Global efforts to develop fully autonomous, AI-powered weapon systems are on the brink of turning into a full-blown arms race, warns a recent report from a Dutch peace group.

Tech giants such as Amazon, Microsoft, and Intel are likely to be spearheading a worldwide AI arms race, according to the report by PAX, a Dutch anti-war NGO. The report is based on a survey of the most influential companies in today’s technology sector, conducted to identify their stances on lethal autonomous weapons.

The report ranks 50 companies on three main criteria: whether they are developing technology that could be relevant to killer robots, whether they are involved in military or related projects, and whether they have committed to abstaining from developing such technologies in the future. After reviewing these 50 companies, the report flags 21 of them as warranting “higher concern” because of what their products could mean for the development of lethal autonomous weapons. Notably, these include Amazon and Microsoft, both of which are reportedly bidding for a $10 billion Pentagon contract to provide cloud infrastructure to the US military.

PAX’s investigation argues that certain innovations in commercial AI, especially in facial recognition, ground robotics, and system integration, could have deep implications for the defense sector. Frank Slijper, the report’s lead author, asks: “Why are companies like Microsoft and Amazon not denying that they’re currently developing these highly controversial weapons, which could decide to kill people without direct human involvement?”

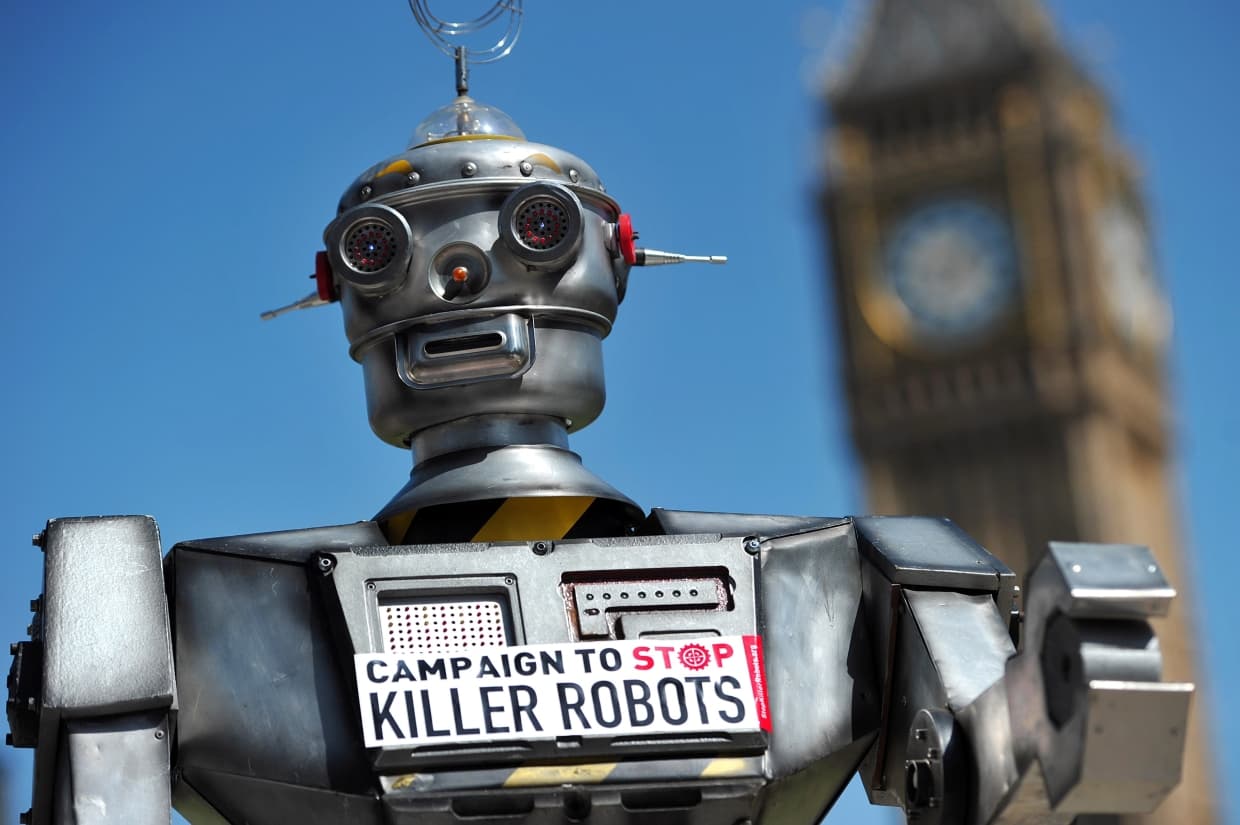

Lethal autonomous weapons, or “killer robots” as PAX calls them, have sparked a number of ethical debates in recent years. These weapons are designed to select and engage targets on their own, without human intervention or control. Operating on their own, PAX says, such machines are “unlikely to comply with the laws of warfare.”

AI experts believe their advent could be called the “third revolution in warfare,” succeeding the invention of gunpowder and the creation of nuclear weapons. Critics warn that developing such technologies could jeopardize international security.

“Lethal autonomous weapons raise abundant legal, security, as well as ethical concerns,” the PAX report says. It goes on to explain why it would be deeply unethical to delegate the decision “over life and death” to a machine guided only by algorithms. PAX also foresees an “accountability vacuum” when such weapons commit improper or illegal acts.